Vision Systems Design reports on a recent paper by Ankit Gupta and Brian Curless (UW CSE) and Robert T. Held and Maneesh Agrawala (UC Berkeley), “3D Puppetry: A Kinect-based Interface for 3D Animation.”

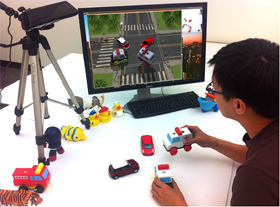

“Researchers at the University of Washington (Seattle, WA, USA) and the University of California, Berkeley (Berkeley, CA, USA) have created a system that can enable even inexperienced puppeteers to produce 3-D animations.

“To use the system, a puppeteer physically manipulates objects in front of a Kinect depth sensor. The system then uses a combination of image-feature matching and 3-D shape matching to identify and track the objects. It then renders the corresponding 3-D models into a virtual set.

“The system operates in real time so that a puppeteer can immediately see the resulting animation and make adjustments on the fly. It also provides 6-D virtual camera and lighting controls, which the puppeteer can adjust before, during, or after a performance. Layered animations can be used to help puppeteers produce animations in which several characters move at the same time.”

Read the post here. Read the technical paper here. Watch the cool video here.