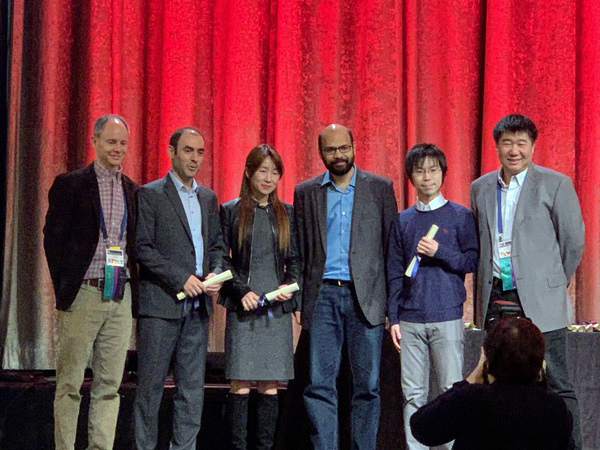

Allen School professor Yejin Choi and her colleagues Keisuke Sakaguchi, Ronan Le Bras and Chandra Bhagavatula at the Allen Institute for Artificial Intelligence (AI2) recently took home the Outstanding Paper Award from the 34th AAAI Conference on Artificial Intelligence (AAAI-20), organized by the Association for the Advancement of Artificial Intelligence. The winning paper, “WinoGrande: An Adversarial Winograd Schema Challenge at Scale,” introduces new techniques for systematic bias reduction in machine learning datasets to more accurately assess the capabilities of state-of-the-art neural models.

Datasets like the Winograd Schema Challenge (WSC) are used to measure neural models’ ability to exercise common-sense reasoning. They do this by testing whether a model can correctly resolve the pronouns used in sentences describing social or physical relationships between entities or objects, based on contextual clues. These clues tend to be easy for humans to comprehend but pose a challenge for machines. The models are fed pairs of nearly identical sentences that differ primarily by a “trigger” word, which flips the meaning of the sentence by changing the noun to which the pronoun refers. A high score on the test suggests that a model has achieved a level of natural language understanding that goes beyond mere recognition of statistical patterns to a more human-like grasp of semantics.
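To make the twin-sentence format concrete, here is a minimal sketch of how one such pair might be represented. The class name `TwinProblem`, its fields, and the exact sentence wording are assumptions made for illustration (the wording is built around the lion and zebra example mentioned in this article); this is not the actual file format of the WSC or WinoGrande.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass
class TwinProblem:
    """One pair of near-identical sentences that differ only in a trigger word (hypothetical structure)."""
    template: str                 # sentence containing an ambiguous pronoun and a {trigger} slot
    candidates: Tuple[str, str]   # the two nouns the pronoun could refer to
    answers: Dict[str, str]       # trigger word -> noun the pronoun refers to

    def sentences(self) -> Dict[str, str]:
        """Fill the template once per trigger word."""
        return {t: self.template.format(trigger=t) for t in self.answers}


# Illustrative wording only; assumed for this sketch.
lion_zebra = TwinProblem(
    template="The lion ate the zebra because it was {trigger}.",
    candidates=("lion", "zebra"),
    answers={"a predator": "lion", "meaty": "zebra"},
)

for trigger, sentence in lion_zebra.sentences().items():
    print(sentence, "->", lion_zebra.answers[trigger])
```

Swapping the trigger changes which noun “it” refers to while the rest of the sentence stays the same, which is what makes the pair a test of reasoning rather than of surface form.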

But the WSC, which consists of 273 problem sets hand-written by experts, is susceptible to built-in biases that paint an inaccurate picture of a model’s performance. Because individuals naturally tend to repeat their problem-crafting strategies, they also tend to introduce annotation artifacts: unintentional patterns in the data that reveal information about the target label and can skew the results of the test.

For example, if a pair of sentences asks the model to determine whether a pronoun is referring to a lion or a zebra based on the use of the trigger words “predator” or “meaty,” the model will note that the word “predator” is often associated with the word “lion.” In a similar fashion, a reference to a tree falling on a roof will lead the model to correctly associate the trigger word “repair” with “roof,” because while there are very few instances of trees being repaired, it is quite common to repair a roof. By choosing the correct answers to these questions, the model is not indicating an ability to reason about each pair of sentences. Rather, the model is making its selections based on a pattern of word associations it has detected across the dataset that just happens to correspond with the right answers.
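The shortcut described above can be illustrated with a toy baseline that never reads the sentence at all. The co-occurrence counts below are invented purely for illustration and are not measured from any corpus; the function name `guess_by_association` is likewise hypothetical.

```python
# Toy co-occurrence counts: made-up numbers for illustration only.
ASSOCIATION = {
    ("predator", "lion"): 9, ("predator", "zebra"): 1,
    ("meaty", "lion"): 2, ("meaty", "zebra"): 6,
    ("repair", "roof"): 8, ("repair", "tree"): 1,
}


def guess_by_association(trigger, candidates):
    """Pick the candidate most associated with the trigger word, ignoring the sentence entirely."""
    return max(candidates, key=lambda noun: ASSOCIATION.get((trigger, noun), 0))


print(guess_by_association("predator", ("lion", "zebra")))  # lion
print(guess_by_association("repair", ("tree", "roof")))     # roof
```

Both guesses match the correct answers, yet nothing in the function reasons about who ate whom or what fell on what. A benchmark that can be answered this way rewards association rather than understanding.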

“Today’s neural models are adept at exploiting patterns of language and other unintentional biases that can creep into these datasets. This enables them to give the correct answer to a problem, but for incorrect reasons,” explained Choi, who splits her time between the Allen School’s Natural Language Processing group and AI2. “This compromises the usefulness of the test, because the results are not an accurate reflection of the model’s ability. To more accurately assess the state of natural language understanding, we came up with a new solution for systematically reducing these biases.”

That solution enabled the team to produce WinoGrande, a dataset comprising 44,000 sentence pairs that follow a similar format to that of the original WSC. One of the shortcomings of the WSC was its relatively small size owing to the need to hand-write the questions. Choi and her colleagues got around that difficulty by crowdsourcing question material using Amazon Mechanical Turk, following a carefully designed procedure to ensure that problem sets avoided ambiguity or word association and covered a variety of topics. To eliminate any unintentional biases embedded in the dataset at scale, the researchers developed a new algorithm, dubbed AFLite, that employs state-of-the-art contextual representation of words to identify and eliminate annotation artifacts. AFLite is modeled on an existing adversarial filtering (AF) algorithm but is more lightweight, thus requiring fewer computational resources.
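The general idea of filtering out easily predictable instances can be sketched in a few lines. This is a simplified illustration in the spirit of adversarial filtering, not the authors’ AFLite implementation: it assumes each instance already has a precomputed embedding, it substitutes a nearest-centroid classifier chosen only for brevity, and the function names, threshold, and batch sizes are all hypothetical.

```python
import random


def centroid(vectors):
    """Component-wise mean of a list of equal-length vectors."""
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(len(vectors[0]))]


def sq_dist(a, b):
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def predictability(embeddings, labels, rounds=50, train_frac=0.5, seed=0):
    """For each instance, the fraction of held-out rounds in which a cheap
    classifier trained on a random subset predicts its label correctly."""
    rng = random.Random(seed)
    n = len(embeddings)
    correct, seen = [0] * n, [0] * n
    idx = list(range(n))
    for _ in range(rounds):
        rng.shuffle(idx)
        cut = int(n * train_frac)
        train, held_out = idx[:cut], idx[cut:]
        by_label = {}
        for i in train:
            by_label.setdefault(labels[i], []).append(embeddings[i])
        if len(by_label) < 2:      # need both classes to train a classifier
            continue
        cents = {lab: centroid(vs) for lab, vs in by_label.items()}
        for i in held_out:
            pred = min(cents, key=lambda lab: sq_dist(embeddings[i], cents[lab]))
            seen[i] += 1
            correct[i] += pred == labels[i]
    return [c / s if s else 0.0 for c, s in zip(correct, seen)]


def filter_artifacts(embeddings, labels, threshold=0.75, max_remove=2, min_size=4, **kw):
    """Repeatedly drop the most predictable instances; return indices that survive."""
    keep = list(range(len(embeddings)))
    while len(keep) > min_size:
        scores = predictability([embeddings[i] for i in keep],
                                [labels[i] for i in keep], **kw)
        ranked = sorted(zip(scores, keep), reverse=True)
        drop = [i for s, i in ranked[:max_remove] if s >= threshold]
        drop = drop[: len(keep) - min_size]   # never shrink below min_size
        if not drop:
            break
        keep = [i for i in keep if i not in drop]
    return keep
```

Instances whose labels can be guessed from the embedding alone are the ones most likely to carry annotation artifacts, so removing them leaves a harder and less biased subset. In this toy version nothing keeps the two labels balanced as instances are removed, which a real pipeline would likely need to handle.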

The team hoped that their new, improved benchmark would provide a clearer picture of just how far machine understanding has progressed. As it turns out, the answer is “not as far as we thought.”

“Human performance on problem sets like the WSC and WinoGrande surpass 90% correctness,” noted Choi. “State-of-the-art models were found to be approaching human-like levels of accuracy on the WSC. But when we tested those same models using WinoGrande, their performance dropped to between 59% and 79%.

“Our results suggest that common sense is not yet common when it comes to machine understanding,” she continued. “Our hope is that this sparks a conversation about how we approach this core research problem and how we design the benchmarks for assessing future progress in AI.”

Read the research paper here, and a related article in MIT Technology Review here.

Congratulations to Yejin and the team at AI2!