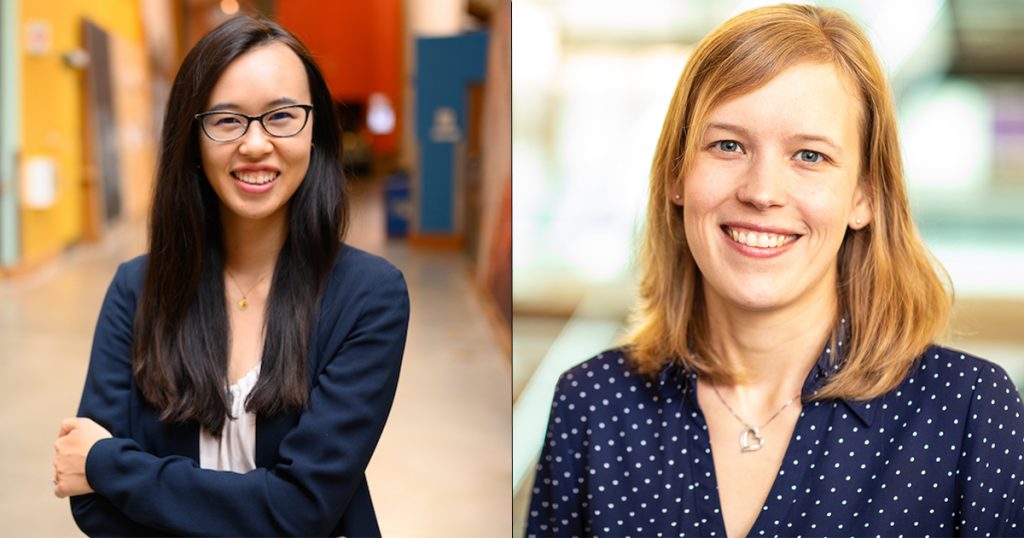

The National Science Foundation (NSF) has selected Allen School professors Amy Zhang, who directs the Social Futures Lab, and Franziska Roesner, who co-directs the Security and Privacy Research Lab, to receive Convergence Accelerator funding for their work with collaborators at the University of Washington and the grassroots journalism organization Hacks/Hackers on tools to detect and help stop misinformation online. The NSF’s Convergence Accelerator program is unique in that its structure offers researchers the opportunity to accelerate their work over the course of a year to find tangible solutions. The program’s curriculum is designed to strengthen each team’s convergence approach and further develop their solution so that it can move on to a second phase with the potential for additional funding.

Zhang and Roesner are working with Human Centered Design & Engineering professor and Allen School adjunct professor Kate Starbird; Information School professor and director of the Center for an Informed Public Jevin West; and internet and Hacks/Hackers researcher at large Connie Moon Sehat, who serves as principal investigator on the project. In their proposal, “Analysis and Response for Trust Tool (ARTT): Expert-Informed resources for Individuals and Online Communities to Address Vaccine Hesitancy and Misinformation,” they aim to develop a software tool, ARTT, that helps people identify and prevent misinformation. Today, that work is done on a smaller scale by individuals and community moderators who have few resources and little expert guidance for combating false information. The team, made up of experts in fields such as computer science, social science, media literacy, conflict resolution and psychology, will develop a software program that helps moderators analyze information online and present practical information that builds trust.

“In our previous research, we learned that rather than platform interventions like ‘fake news’ labels, people often learn that something they see or post on social media is false or untrustworthy from comment threads or other community members,” said Roesner, who serves as co-principal investigator on the ARTT project alongside Zhang. “With the ARTT research, we are hoping to support these kinds of interactions in productive and respectful ways.”

While ARTT is intended to help prevent the spread of misinformation of any kind, the team’s current focus is on combating false information about vaccines. The World Health Organization has identified vaccine hesitancy as one of the top 10 threats to global health.

In addition to her participation in ARTT, Zhang has another Convergence Accelerator project focused on creating a “golden set” of guidelines to help prevent the spread of false information. That proposal, “Misinformation Judgments with Public Legitimacy,” aims to use public juries to render judgments on socially contested issues. Over time, the jurors’ judgments will build up into a “golden set” that social media platforms can use to evaluate information posted on their sites. Besides Zhang, the project team includes the University of Michigan’s Paul Resnick, associate dean for research and faculty affairs and professor at the School of Information, and David Jurgens, professor at the School of Information and in the Department of Electrical Engineering & Computer Science; and the Massachusetts Institute of Technology’s David Rand, professor of management science and brain and cognitive sciences, and Adam Berinsky, professor of political science.

Online platforms are increasingly called on to reduce the spread of false information, but there is little agreement on what process they should use to do so, and many social media sites are not fully transparent about their policies and procedures for combating misinformation. Zhang’s group will develop a forecasting service that can serve as an external audit of platforms’ efforts to reduce false claims online. The “golden sets” created from the juries’ work will serve as training data to improve the forecasting service over time. Platforms that use this service will also be more transparent about their judgments regarding false information posted on their sites.

“The goal of this project is to determine a process for collecting judgments on content moderation cases related to misinformation that has broad public legitimacy,” Zhang said. “Once we’ve established such a process, we aim to implement it and gather judgments for a large set of cases. These judgments can be used to train automated approaches that can be used to audit the performance of platforms.”

Participation in the Convergence Accelerator program includes a $749,000 award for each team to develop their work. Learn more about the latest round of awards here and read about all of the UW teams that earned a Convergence Accelerator award here.