New approaches to finetuning large language models that decrease computational burden while enhancing performance. A robotic arm that safely delivers a forkful of food to someone’s mouth. A system that combines wireless earbuds and algorithms into a low-cost hearing screening tool.

These are just a sample of the nearly 60 projects that were on display during the Allen School’s Research Showcase and Open House at the University of Washington last week, capping off a day-long celebration of computing innovations that are advancing the field and addressing societal challenges. Nearly 300 Industry Affiliate partners, alumni and friends participated in the 2023 event, which included sessions devoted to computer science ethics, intelligent transportation, computing for sustainability, computing for health, natural language processing and more.

Everybody’s talking about LLMs

Attendees got the chance to sink their teeth into some of the latest advances in natural language processing during a luncheon keynote by Allen School professor Hannaneh Hajishirzi exploring the science behind large language models and the development of models to serve science.

“We have witnessed great progress in large language models over the past few years. These models create extremely fluent text, conversation-like text, and also code,” said Hajishirzi, who holds the Torode Family Professorship at the Allen School and is also senior director of AllenNLP at the Allen Institute for AI. “Now they are being deployed in a diverse range of applications. And everybody these days is talking about their impact on society, their risks, their economic impacts, and so on.”

Those impacts and risks leave plenty of open questions for AI researchers to resolve, as LLMs continue to be computationally expensive, error-prone and difficult to maintain. They are also largely being developed by private companies.

“All of these models are proprietary,” Hajishirzi noted. “So it’s very hard for AI researchers to actually understand and analyze what is going on.”

Hajishirzi and her colleagues favor a more open approach to building models that are transparent, reproducible and accessible. But there are many definitions of “open.” Even if a company opens up its API or makes a model available for research purposes, restrictions remain, such as the inability to access the data on which the models are trained.

As an alternative, Hajishirzi and her collaborators created OLMo, short for Open Language Model. OLMo is a full language modeling pipeline in which “everything is open,” from pre-training to reinforcement learning from human feedback (and all stages in between). By being so transparent and engaging the broader AI research community, Hajishirzi hopes the project will help narrow the gap between the public and private sectors. Their good intentions are not limited to advancing AI research, either; the team is also developing the capability to advance scientific discovery in other disciplines by finetuning and training on their data.

To that end, Hajishirzi and her colleagues developed a large-scale, high-quality pretraining dataset cleverly named Dolma, short for “data to feed OLMo’s appetite.” The dataset, which comprises 3.1 trillion tokens in total, is significantly larger than previous open datasets. A significant portion — 2.6 trillion tokens — is web data covering diverse domains, from Reddit to scientific data, filtered to eliminate toxicity and personally identifying information as well as duplication. Dolma has been downloaded 320,000 times in just the past month.

But how does this approach compare to that of state-of-the-art closed models? When it comes to the latter, “there are too many question marks,” Hajishirzi noted, pointing out that we don’t have sufficient information about the datasets — including not knowing how many tokens the models are trained on.

That is not a problem when it comes to the work of Hajishirzi and her collaborators — including the development of novel approaches to instruction tuning to enable pretrained models to generalize to new applications. Hajishirzi described the result of those efforts, a project called Tülu, as “the largest, best and open instruction tuned model at this point.” And the team continues to make improvements; for example, they have added the ability to extract information from scientific papers and to perform parameter-efficient finetuning for use in low-resource contexts. The researchers have also developed an effective evaluation framework that includes in-loop evaluation of the training at every step of the process.

Such progress does not come without a cost, however.

“This project required a lot of compute. It still requires a lot of GPUs and compute,” Hajishirzi observed, citing the need to improve computational efficiency so that more communities can make use of these models.

How low can you go?

As it happens, multiple Allen School researchers are attempting to answer this question — and answer Hajishirzi’s call — by exploring techniques for making LLMs more efficient. Teams shared their results from projects addressing this and a range of other challenges during the open house and poster session.

The event culminated with Scott Jacobson, managing director at Madrona Venture Group, announcing the recipients of the Madrona Prize, which highlights cutting-edge research at the Allen School with commercial potential. In his remarks, Jacobson highlighted the firm’s longstanding partnership with the Allen School, which extends to supporting multiple startup companies based on student and faculty research that is helping to shape the future of the field.

“There’s so much great research here,” said Jacobson. “Over the years, a number of themes that I think are now kind of commonplace in tech were really pioneered here. A lot of those themes you’ve seen in the poster session on Industry Affiliates day: cloud computing, edge computing, computer vision, a lot of applied machine learning and AI. And so it’s just really fun every year for us to get the opportunity to do this.”

Madrona Prize Winner / QLoRA: Efficient Finetuning of Quantized LLMs

Allen School Ph.D. student Tim Dettmers accepted the grand prize for QLoRA, a novel approach to finetuning pretrained models that significantly reduces the GPU memory required to finetune a 65B-parameter model, from over 780GB to less than 48GB. With QLoRA, the largest publicly available models can be finetuned on a single professional GPU, and 33B models on a single consumer GPU, with no degradation in performance compared to a full finetuning baseline. The approach will help close the gap between large companies and smaller research teams, and could potentially enable finetuning on smartphones and in other low-resource contexts. The team behind QLoRA includes Allen School Ph.D. student Artidoro Pagnoni; alum Ari Holtzman (Ph.D., ’23), incoming professor at the University of Chicago; and professor Luke Zettlemoyer, who is also a research manager at Meta.
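To get a feel for where memory figures of this size come from, here is a rough back-of-the-envelope sketch in Python. The per-parameter byte counts are assumptions made for illustration, not numbers from the QLoRA team: 16-bit weights and gradients plus two 32-bit optimizer states per parameter for full finetuning, and 4 bits per weight for a quantized, frozen base model. The function names are hypothetical.

```python
# Back-of-the-envelope estimate only. The byte counts per parameter are
# illustrative assumptions, not measurements reported by the QLoRA authors.
GB = 1e9  # decimal gigabytes


def full_finetune_gb(n_params, weight_bytes=2, grad_bytes=2, optimizer_bytes=8):
    """Memory if every parameter keeps a 16-bit weight, a 16-bit gradient
    and two 32-bit optimizer states (assumed Adam-style optimizer)."""
    return n_params * (weight_bytes + grad_bytes + optimizer_bytes) / GB


def quantized_base_gb(n_params, bits_per_weight=4):
    """Memory for the frozen base weights alone at the given bit width.
    Adapter weights, activations and other overhead are not counted."""
    return n_params * bits_per_weight / 8 / GB


n_params = 65e9  # the 65B-parameter model mentioned above
full = full_finetune_gb(n_params)
base = quantized_base_gb(n_params)
print(f"full 16-bit finetuning: about {full:.0f} GB")
print(f"4-bit frozen base weights: about {base:.1f} GB")
```

Under these assumptions the estimate lands at roughly 780 GB for full finetuning, while the 4-bit base weights alone take about 32.5 GB, which leaves headroom under 48 GB for the small trainable adapters and activations.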

Madrona Prize First Runner Up / Punica: Multi-Tenant LoRA Fine-tuned LLM Serving

Another team earned accolades for their work on Punica, a framework that makes low-rank adaptation of pre-trained models for domain-specific tasks more efficient by serving multiple LoRA models in a shared GPU cluster. Punica’s new CUDA kernel design allows for batching of GPU operations for different models while requiring a GPU to hold only a single copy of the underlying pre-trained model, significantly reducing the level of memory and computation required. The research team includes Allen School Ph.D. students Lequn Chen and Zihao Ye; Duke University Ph.D. student Yongji Wu; Allen School alum Danyang Zhuo (Ph.D., ’19), now a professor at Duke; and Allen School professors Luis Ceze and Arvind Krishnamurthy.
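The idea of many LoRA models sharing one copy of the base weights can be illustrated with a toy sketch in plain Python. This is not Punica’s CUDA kernel or its API; the function names, tenant names and tiny matrices are all hypothetical. Each request computes the shared base output plus a small low-rank correction chosen by its adapter, so only the low-rank pairs are stored per tenant. The real system batches this work on the GPU; the loop here only shows the arithmetic.

```python
# Toy illustration only: a plain-Python stand-in for the idea of serving
# several LoRA adapters over one shared base model. Not Punica's kernel.

def matvec(x, m):
    """Multiply row vector x (length n) by matrix m (n rows, k columns)."""
    return [sum(x[i] * m[i][j] for i in range(len(x))) for j in range(len(m[0]))]


def serve_batch(requests, base_w, adapters):
    """requests is a list of (adapter_name, input_vector) pairs.
    base_w is stored once and reused for every request."""
    outputs = []
    for name, x in requests:
        a, b = adapters[name]            # low-rank pair: a is d_in x r, b is r x d_out
        base_out = matvec(x, base_w)     # shared pre-trained weights
        delta = matvec(matvec(x, a), b)  # per-tenant low-rank correction
        outputs.append([p + q for p, q in zip(base_out, delta)])
    return outputs


base_w = [[1.0, 0.0], [0.0, 1.0]]                # hypothetical 2x2 base weights
adapters = {
    "tenant_a": ([[1.0], [0.0]], [[0.0, 2.0]]),  # hypothetical rank-1 adapter
    "tenant_b": ([[0.0], [1.0]], [[3.0, 0.0]]),  # hypothetical rank-1 adapter
}
results = serve_batch(
    [("tenant_a", [1.0, 1.0]), ("tenant_b", [1.0, 1.0])], base_w, adapters
)
print(results)
```

Both requests send the same input, yet each gets a different output because a different adapter is applied on top of the single shared weight matrix.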

Madrona Prize Second Runner Up / Wireless Earbuds for Low-cost Hearing Screening

Allen School researchers were recognized for their work with clinicians on OAEbuds, which combines low-cost wireless acoustic hardware and sensing algorithms to reliably detect otoacoustic emissions generated by the ear’s cochlea. The system offers an alternative to conventional (and expensive) hardware to make hearing screening more accessible in low- and middle-income countries. Allen School Ph.D. student Antonio Glenn accepted on behalf of the team, which also includes Allen School alum Justin Chan (Ph.D., ’22), incoming professor at Carnegie Mellon University; professors Shyam Gollakota and Shwetak Patel, who has a joint appointment in the UW Department of Electrical & Computer Engineering; ECE Ph.D. student Malek Itani; Drs. Randall Bly and Emily Gallagher of UW Medicine and Seattle Children’s; and audiologist Lisa Mancl, affiliate instructor in the UW Department of Speech & Hearing Sciences.

People’s Choice Award / ADA, the Assistive Dexterous Arm: A Deployment-Ready Robot-Assisted Feeding System

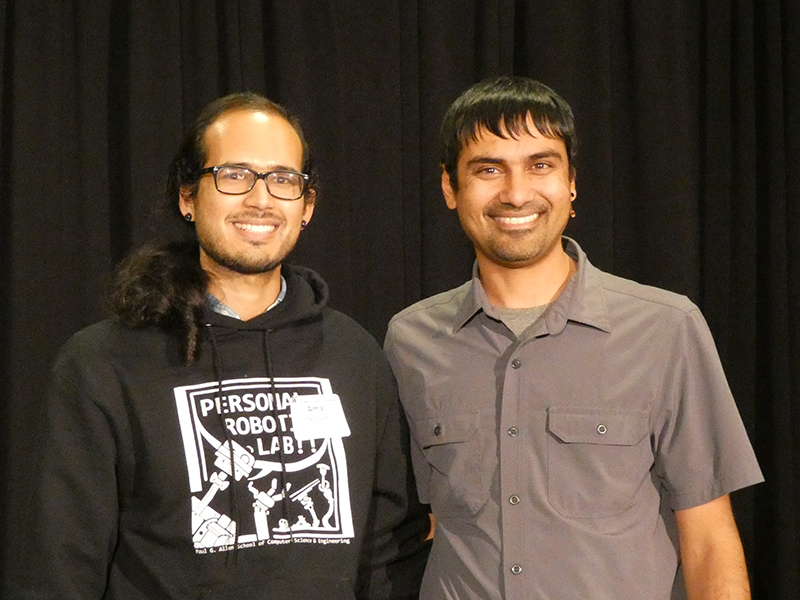

Also affectionately referred to as “the food thing” by attendees, who overwhelmingly voted it their favorite demo of the night, ADA aims to address a variety of technical and human challenges associated with robot-assisted feeding to improve quality of life for people with mobility limitations. The researchers invited visitors to try the system for themselves by using a smartphone app to direct ADA in feeding them forkfuls of fruit. Ph.D. student Amal Nanavati accepted the award from professor Shwetak Patel, the Allen School’s associate director for development and entrepreneurship. The team also includes Ph.D. students Ethan Gordon and Bernie Hao Zhu; undergraduate researcher Atharva Kashyap; Haya Bolotski, a participant in the Personal Robotics Lab’s youth research program; Allen School alum Raida Karim (B.S., ’22); postdoc Taylor Kessler Faulkner; and professor Siddhartha Srinivasa. Read more about the robot-assisted feeding project in a recent UW News Q&A with the ADA team here.

For more about the Allen School’s 2023 Research Showcase and Open House, read GeekWire’s coverage here and the Madrona Prize announcement here.