There has been a lot of chatter lately, online and in various media outlets, about the supposed dwindling prospects for new computer science graduates in the artificial intelligence era. Recent layoffs in the technology sector have students, parents and educators worried that a degree in computing, once seen as a sure path to a fulfilling career, is no longer a reliable bet.

“The alarmist doom and gloom prevalent in the news is not consistent with the experiences of the vast majority of our graduates,” said Magdalena Balazinska, professor and director of the Allen School. “The industry will continue to need smart, creative software engineers who understand how to build and harness the latest tools — including AI. And the fact remains that a computer science degree is great preparation for a broad range of fields within and beyond technology, including the natural sciences, finance, medicine and law.”

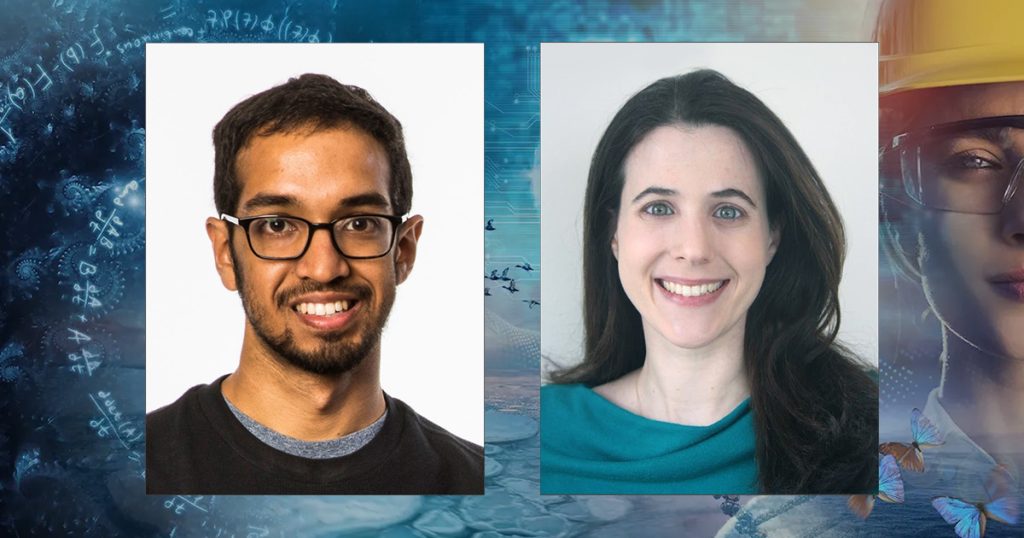

In our own version of MythBusters, we asked Balazinska and professor Dan Grossman, vice director of the Allen School, to examine the myths and realities surrounding AI and the prospects for current and future Allen School majors. Their answers indicate that the rumored demise of software engineering as a career path has been greatly exaggerated — and that no matter what path computer science majors choose after graduation, they can use their education to change the world.

Also be sure to check out our fact sheet, Computer Science Careers and AI: Myth vs. Reality.

Let’s start with the question on everyone’s mind: What’s the job market like for computer science graduates these days?

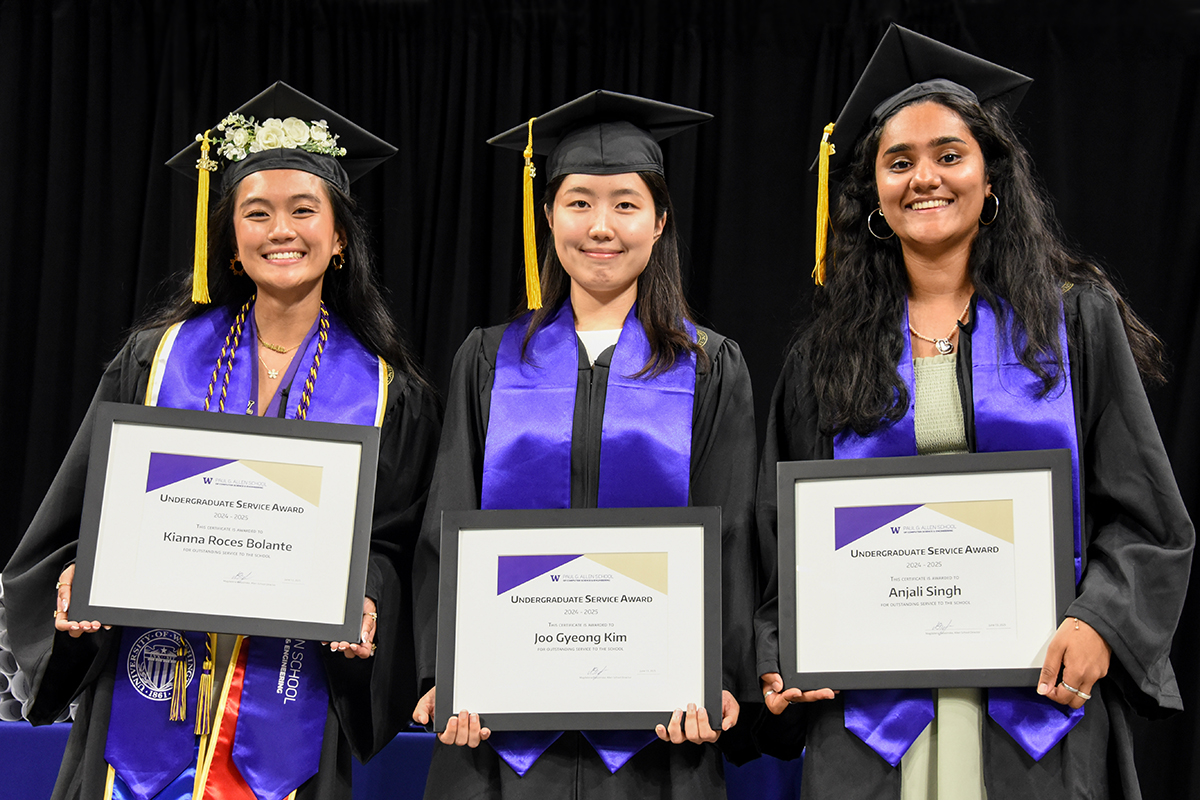

Dan Grossman: Individuals’ mileage may vary depending on a range of factors, but what’s being reported in some media outlets doesn’t reflect what we’re seeing here at the University of Washington. More than 120 different companies hired this year’s Allen School graduates into software engineering roles. Amazon alone hired more than 100 graduates from the Allen School’s 2024-25 class. Google and Meta didn’t hire at that scale, but each hired 20 graduates this year, more than last year. Microsoft hired more than two dozen graduates from this latest class. I expect these numbers to grow as we hear from more graduates.

So, while the job market is tighter now than it was a few years ago, the sky is not falling. It’s important to remember that the Allen School is one of the top computer science programs in the nation, with a cutting-edge curriculum that evolves alongside the industry while remaining grounded in the fundamentals of our field. Our graduates are highly sought after, so their experience in the job market doesn’t necessarily reflect the experience of others. That has always been the case, even before this latest handwringing over AI. A B.S. in CS is not a uniform credential. The Allen School has always produced highly competitive graduates.

Magdalena Balazinska: In addition to those who found employment after graduation, more than 100 of our recent graduates opted to continue their education by enrolling in a master’s or Ph.D. program, which, of course, also makes us immensely proud!

How is AI impacting software engineering jobs?

MB: There are two factors: (1) AI’s impact on the work of a software engineer, and (2) AI’s impact on the job market for software engineers. Regarding the latter, it’s not so much that AI is taking the jobs as that companies are having to devote tremendous resources to the infrastructure behind AI, which is very expensive. Also, many companies over-hired during COVID, and now they’re doing a course-correction for the AI era. I look at this as more of a reset. There’s no question that AI is affecting many areas of computing, just as it’s affecting nearly every other sector of the economy. Companies will continue to invest in the people who know how to build and leverage these and other tools.

To my first point: With AI, we should expect the work of a software engineer to change, but to change in a really exciting way! The task of coding, or the translation of a very precise design into software instructions, can largely be handled by AI. But that’s not the most exciting or challenging part of software engineering. Understanding the requirements, figuring out an appropriate design, and articulating it as a precise specification are the hard parts. Going forward, software engineers will spend more time imagining what systems to build and how to organize the implementation of those systems, and then let AI handle many of the details of converting those ideas into code.

DG: One of our former faculty colleagues, Oren Etzioni, said, “You won’t be replaced by an AI system, but you might be replaced by a person who uses AI better than you.” I think that’s the direction we’re headed. Not AI as a replacement for people, but as a differentiator. Here at the Allen School, one of our goals is to enable students to differentiate themselves in this rapidly evolving landscape. For example, we are introducing a course on AI-aided software development, which will teach students how to effectively harness these tools.

How has AI affected student interest in the Allen School?

DG: Student interest remains strong — we received roughly 7,000 applications for Fall 2025 first-year admission.

MB: That may sound like a daunting number. However, we were able to offer admission to 37% of the Washington high school students who applied. That’s not as high as we would like it to be, but it’s far higher than many people assume. We achieve this by heavily favoring applicants from Washington. For Fall 2025, we offered admission to only 4% of applicants from outside Washington.

If AI can write code, why should students major in computer science?

MB: Because computer science is so much more than coding! Creating a new system or application, perhaps a system to help the elderly take care of their daily tasks and manage their paperwork or a new approach for doctors to perform long-distance tele-operations, isn’t just a matter of “writing code.” A software engineer begins by clearly understanding the requirements — what the system needs to provide. Then the software engineer will decompose the problem into pieces, understand how those pieces will fit together, and anticipate failures. What happens if there is a power or network failure, or someone tries to hack the system? This gets progressively more challenging with the complexity and scale of systems that software engineers build, typically on teams with many people working together. Coding is the relatively easier part.

DG: In that spirit, here in the Allen School, we do teach students how to code, but as a component of how to envision, design and build systems and applications that solve complex problems and touch people’s lives. The principles, precision, and reasoning gained from reading and writing code are a necessary foundation that serves our students very well, including graduates who now use AI in industry. It is the software engineers with the deepest knowledge who will be most effective at using AI to write their code, because they will know how and where AI can go wrong and how to steer it toward correct output.

MB: Engineers have always used tools, and their tools have always advanced with time and opened the door to innovation. Thanks to developments like modern programming languages and libraries, online resources like Stack Overflow and GitHub, automated testing, cloud computing, and more, software engineers today are far more efficient and can develop applications more quickly than ever before. And this was before AI for coding had really taken off. And yet, there are more software engineers doing more interesting and important things than ever before!

How does the Allen School prepare students for a workplace — and a world — being transformed by AI?

MB: As a leader in AI research, the Allen School is ideally positioned to help students learn how to use AI, how to build AI, and how to move the field of AI forward to benefit humanity. We give students multiple opportunities to explore AI topics and tools as part of our curriculum. Dan mentioned our AI-assisted software development course, and many of our other courses allow for using AI assistance in well-prescribed ways. This enables students to focus on core course concepts, build more complex projects, and so on. Gaining experience with any AI tool can give a sense of what the technology can help with, along with its limitations. That said, in some courses we will continue to expect students to design, build, test, and document software without AI assistance.

DG: Our courses sometimes use the same cutting-edge tools used in industry, and at other times provide a simpler setting for pedagogical purposes. Software engineering tools change rapidly, so rather than getting into the weeds on any one particular tool, we give students the confidence to pick up future tools. Importantly, we don’t just teach students how to build and use AI. We also help them to think critically about the ethics and societal impacts of these technologies, such as their potential to reinforce bias or be used as a surveillance tool, and ways to mitigate those impacts.

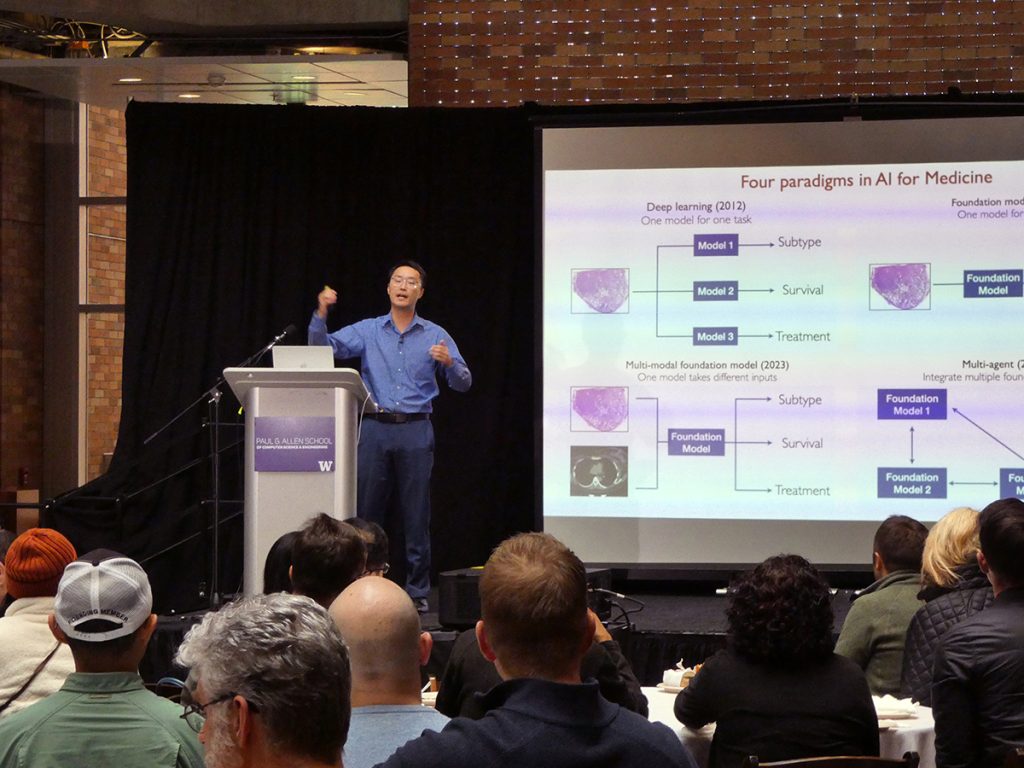

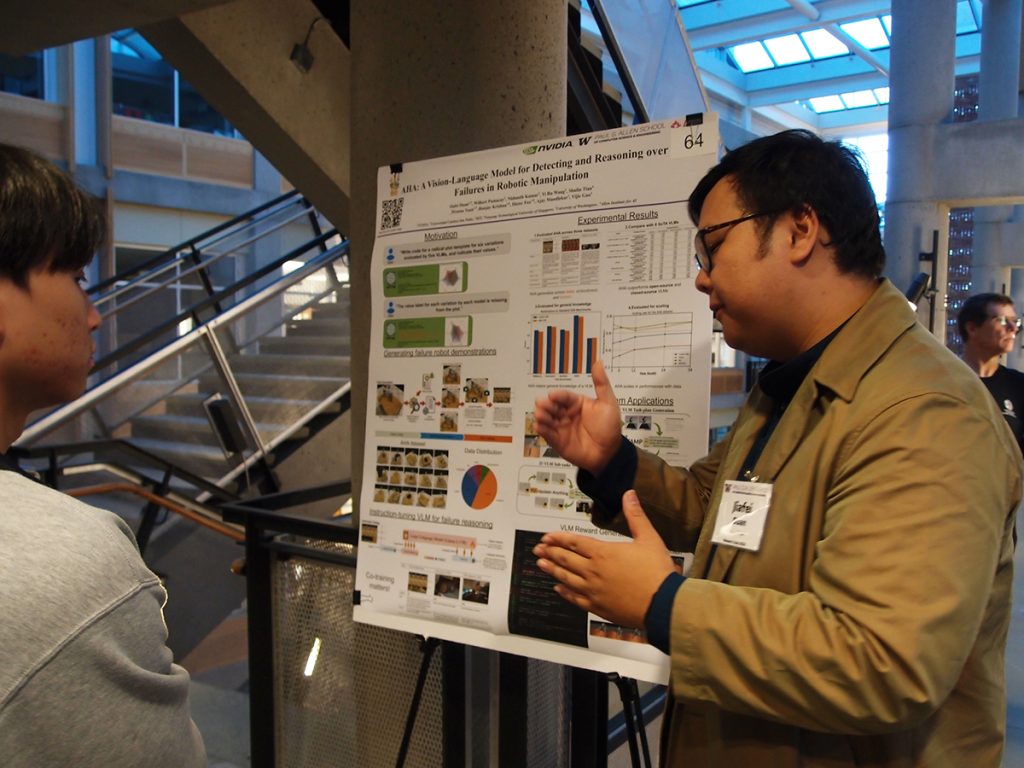

MB: Another advantage we have at the Allen School is that we are a leading research institution, and our faculty are among the foremost experts in the field. This gives us the ability to incorporate new concepts and techniques into our coursework quickly. We also have a program devoted to supporting undergraduates in engaging in hands-on research in our labs alongside those very same faculty and our amazing graduate students and postdocs. Many students choose to get involved in research during their undergraduate studies.

What if a student is interested in computing, but not AI?

DG: Great! There are many open challenges across computing, from systems development to human-facing interactive software design, hardware design, data management, and many others. Even if you are not using or developing AI itself, building systems that can run AI efficiently is driving a lot of exciting work in the field these days. While the big breakthroughs that have been driving rapid change over the last few years are AI-centered, computing remains a broad field.

MB: A student can major in computer science and follow their passion wherever it takes them. A subset of students will choose to study AI and build the next AI technologies, but the vast majority will use AI as a tool while building systems for medicine, education, transportation, the environment, and other important purposes. Or they will build back-end infrastructure at global companies like Google, Amazon, or Microsoft, or tackle other challenges like those that Dan mentioned. The more we advance computing, the more we open new opportunities. I think that’s why the number of software engineers just keeps growing. There is always more to do. The job is never done.

What is your advice to current and aspiring computer science majors who worry about their career prospects with the rise of AI?

MB: First, if you think you want to be a computer scientist or a computer engineer, pursue that! If you choose a major that you are excited about, you will not mind spending hours deepening your knowledge and sharpening your skills, which will help you to become an expert and to enjoy your chosen profession even more. My advice to every student is to take a broad range of challenging courses. Learn how to use the current tools, with the understanding that the tools you use today will not be the ones you use tomorrow. This field moves fast, which is what makes it exciting.

When it’s time to start your job search, whether for an internship or a full-time job, apply broadly. Apply to large companies, small companies, companies in various sectors, non-profits, and so on. Many organizations need software engineers! And not all interesting technical jobs that use a computing degree have the title of software engineer. Pick the position where you will learn the most. It’s important to optimize for learning and for growth, especially early on in one’s career.

DG: I would also remind students that a UW education is not about vocational training; our goal is that students graduate with the knowledge and skills to succeed in their chosen career, yes, but also to be engaged citizens of the world. While you’re here, make the most of your education — take a range of challenging courses and put in the time to learn the material. After all, it is a multi-year investment on your part, and the faculty have invested a lot of time and effort into creating a challenging, coherent curriculum for you. Take the hardest classes that you think are also the most exciting ones, and then focus on learning as much as you can.

Any final thoughts?

DG: Don’t choose a major solely because it’s popular. Choose a major that you’re passionate about. If that’s computer science or computer engineering, we’d love to see you at the Allen School. If it’s something else, we’d still love to see you in some of our classes.

MB: Everyone can benefit from learning at least a little computer science, especially now in the AI era!

Download the fact sheet: Computer Science Careers and AI: Myth vs. Reality