What if you could engage with the world — go to class, do your job, meet up with friends, get a workout in — without leaving the comfort of your bedroom thanks to mixed-reality technology?

What if you could customize your avatar in many ways, but you couldn’t make it reflect your true racial or cultural identity? What if the best way to get a high-paying job was based on your access to this technology, only you are blind and the technology was not designed for you?

And what if notions of equity and justice manufactured in that virtual world — at least, for some users — still don’t carry over into the real one?

These and many other questions come to mind as one reads “Our Reality,” a new science-fiction novella authored by Tadayoshi (Yoshi) Kohno, a professor in the University of Washington’s Paul G. Allen School of Computer Science & Engineering. Kohno, who co-directs the UW’s Security and Privacy Research Lab and Tech Policy Lab, has long regarded science fiction as a powerful means through which to critically examine the relationship between technology and society. He previously explored that relationship in a short piece he contributed to the Tech Policy Lab’s recent anthology, “Telling Stories,” which explores culturally responsive artificial intelligence (AI).

“Our Reality,” so named for the mixed-reality technology he imagines taking hold in the post-pandemic world circa 2034, is Kohno’s first foray into long-form fiction. The Allen School recently sat down with Kohno with the help of another virtual technology — Zoom — to discuss his inspiration for the story, his personal as well as professional journey to examining matters of racism, equity and justice, and how technology meant to help may also do harm.

First of all, congratulations on the publication of your first novella! As a professor of computer science and a well-known cybersecurity researcher, did you ever expect to be writing a work of science fiction?

Yoshi Kohno: I’ve always been interested in science fiction, ever since I was a kid. I’ve carried that fascination into my career as a computer science educator and researcher. Around 10 years ago, I published a paper on science fiction prototyping and computer security education that talked about my experiences asking students in my undergraduate computer security course to write science fiction as part of the syllabus. Writing science fiction means creating a fictional world and then putting technology inside that world. Doing so forces the writer — the students, in my course — to explore the relationship between society and technology. It has been a dream of mine to write science fiction of my own. In fact, when conducting my research — on automotive security, or cell phone surveillance, or anything else — I have often discussed with my students and colleagues how our results would make good plot elements for a science fiction story! The teenage me would be excited that I finally did it.

What was the inspiration for “Our Reality,” and what made you decide now was the right time to realize that childhood dream?

YK: Recently, many people experienced a significant awakening to the harms that technology can bring and to the injustices in society. We, as a society and as computer scientists, have a responsibility to address these injustices. I have written a number of scientific, scholarly papers. But I realized that some of the most important works in history are fiction. Think about the book “1984,” for example, and how often people quote from it. Now, I know my book will not be nearly as transformative as “1984,” but the impact of that book and others inspired me.

I was particularly disturbed by how racism can manifest in technologies. As an educator, I believe that it is our responsibility to help students understand how to create technologies that are just, and certainly that are not racist. I wrote my story with the hope that it would inform the reader’s understanding of racism and racism in technology. At the same time, I also want to explicitly acknowledge that there are already amazing books on the topic of racism and technology. Consider, for example, Dr. Ruha Benjamin’s book “Race After Technology,” or Dr. Safiya Umoja Noble’s book “Algorithms of Oppression.” I hope that readers committed to addressing injustices in society and in technology read these books, as well as the other books I cite in the “Suggested Readings” section of my novella.

In addition to racism in technology, my story also tries to surface a number of other important issues. I sometimes intentionally added flaws to the technologies featured in “Our Reality” — flaws that can serve as starting points for conversations.

How did your role as the Allen School’s Associate Director for Diversity, Equity, Inclusion and Access inform your approach to the story?

YK: I view diversity, equity, inclusion, and access as important to me as an individual, important for our school, and important for society. I’ve thought about the connection between society and technology throughout my career. As a security researcher and educator, I have to think about the harms that technologies could bring. But when I took on the role of Associate Director, I realized that I had a lot more learning to do. I devoured as many resources as I could, I talked with many other people with expertise far greater than my own, I read many books, I enrolled in the Cultural Competence in Computing (3C) Fellows Program, and so on. This is one of the things that we as a computer science community need to always be doing. We should always be open to learning more, to questioning our assumptions, and to realizing that we are not the experts.

One of the things that I’ve always cared about as an educator is helping students not only understand the relationship between society and technology, but also recognize how technologies can do harm. I’m motivated to get people thinking about these issues and proactively working to mitigate those harmful impacts. If people are not already thinking about the injustices that technologies can create, then I hope that “Our Reality” can help them start.

One of the main characters in “Our Reality,” Emma, is a teenage Black girl. How did you approach writing a character whose identity and experiences would be so different from your own?

YK: Your question is very good. I have several answers that I want to give. First, this question connects to one of the teaching goals of “Our Reality” and, in particular, to Question 6 in the “Questions for Readers” section of the novella. Essentially, how should those who design technologies approach their work when the users or affected stakeholders might have identities very different from their own? And how do they ensure that they don’t rely on stereotypes or somehow create an injustice for those users or affected stakeholders? Similarly, in writing “Our Reality,” and with my goal of contributing to a discussion about racism in technology, I found myself in the position of centering Emma, a teenage girl who is Black. This was a huge responsibility, and I knew that I needed to approach this responsibility respectfully and mindfully. My own identity is that of a cisgender man who is Japanese and white.

The first thing I did towards writing Emma was to buy the book “Writing the Other” by Nisi Shawl and Cynthia Ward. It is an amazing book, and I’d recommend it for anyone else who wishes to write stories that include characters with identities other than their own. I read their book last summer. I then discovered that Shawl was teaching a year-long course in science fiction writing through the Hugo House here in Seattle, so I enrolled. That taught me a significant amount about writing science fiction and writing about diverse characters.

I should acknowledge that while I tried the best I could, I might have made mistakes. While I have written many versions of this story, and while I’ve received amazingly useful feedback along the way, any mistakes that remain are mine and mine alone.

In your story, Our Reality is also the name of a technology that allows users to experience a virtual, mixed-reality world. One of the other themes that stood out is who has access to technologies like Our Reality — the name implies a shared reality, but it’s only really shared by those who can afford it. There are the “haves” like Emma and her family, and the “have nots” like Liam and his family. How worried are you that unequal access to technology will exacerbate societal divides?

YK: I’m quite worried that technology will exacerbate inequity along many dimensions. In the context of mixed-reality technology like Our Reality, I think there are three reasons that a group of people might not access it. One is financial; people may not be able to afford the mixed-reality Goggles, the subscription, the in-app purchases, and so on. They either can’t access it at all or will have unequal access to its features, like Liam discovered when he tried to customize his avatar beyond the basic free settings. The second reason is that the technology was not designed for them. I alluded to this in the story when I brought up Liam’s classmate Mathias, who is blind. Finally, some people will elect not to access the technology for ideological reasons. When I think about the future of mixed-reality technologies, like Our Reality, I worry that society will further fracture into different groups, the “haves” or “designed fors” and the “have nots” or “not designed fors.”

Emma’s mother is a Black woman who holds a leadership position in the company that makes Our Reality, but her influence is still limited. For example, Emma objects to the company’s decision to make avatars generic and “raceless,” which means she can’t fully be herself in the virtual world. What did you hope people would take away from that aspect of the story?

YK: First, this is an example of one of the faults that I intentionally included in the technologies in “Our Reality.” I also want to point the reader to the companion document that I prepared, which describes in more detail some of the educational content that I tried to include in “Our Reality.” Your question connects to so many important topics, such as colorblind racism and the notion of “the unmarked state,” the default persona that one envisions if they are not provided with additional information. This also connects to something that Dr. Noble discusses in the book “Algorithms of Oppression” and which I tried to surface in “Our Reality”: that not only do we need to increase the diversity within the field, but we also need to overcome systemic issues that stand in the way of fully considering the needs of all people, and systemic inequities, in the design of technologies.

Stepping back, I am hoping that readers start to think about some of these issues as they read “Our Reality.” I hope that they realize that the situation described in “Our Reality” is unjust and inequitable. I hope they read the companion document, to understand the educational content that I incorporated into “Our Reality.” And then I hope that readers are inspired to read more authoritative works, like “Algorithms of Oppression” and the other books that I reference in the novella and in the companion document.

You and professor Franziska Roesner, also in the Allen School, have done some very interesting research with your students in the UW Security and Privacy Research Lab. Your novella incorporates several references to issues raised in that research, such as tracking people via online advertising and how to safeguard users of mixed-reality technologies from undesirable or dangerous content. It almost feels uncomfortably close to your version of 2034 already. So how can we as a society, along with our regulatory frameworks, catch up and keep up with the pace of innovation?

YK: Rather than having society and our regulatory frameworks catch up to the pace of innovation, we should consider slowing the pace of innovation. Often there is a tendency to think that technology will solve our problems; if we just build the next technology, things will be great, or so people often seem to think. Instead of perpetuating that mentality, maybe we should slow down and be more thoughtful about the long-term implications of technologies before we build them, and before we need to put any regulatory framework in place.

As part of changing the pace of innovation, we need to make sure that the innovators of technology understand the broader societal and global context in which technologies exist. This is one of the reasons why I appreciate the Cultural Competence in Computing (3C) Fellows Program coming out of Duke so much, and why I encourage other educators to apply. That program was created by Dr. Nicki Washington, Dr. Shaundra B. Daily, and graduate assistant Cecilé Sadler at Duke University. The program’s goal is to help empower computer science educators, throughout the world, with the knowledge and skills necessary to help students understand the broader societal context in which technologies sit.

As an aside, one of the reasons that my colleagues Ryan Calo in the School of Law and Batya Friedman in the iSchool and I co-founded the Tech Policy Lab at the University of Washington is that we understood the need for policymakers and technologists to also come together and explore issues at the intersection between society, technology, and policy.

Speaking of understanding context, in the companion document to “Our Reality” you note “Computing systems can make the world a far better place for some, but a far worse one for others.” Can you elaborate?

YK: There are numerous examples of how technologies, when one looks at them from a 50,000-foot perspective, might seem to be beneficial to individuals or society. But when one looks more closely at the specific case of specific individuals, one finds that these technologies are not providing a benefit; in fact, they have the potential to actively cause harm. Consider, for example, an app that helps a user find the location of their family or friends. Such an app might seem generally beneficial: it could help a parent or guardian find their child if they get separated at a park. But now consider situations of domestic abuse. Someone could use that same technology to track and harm their victim.

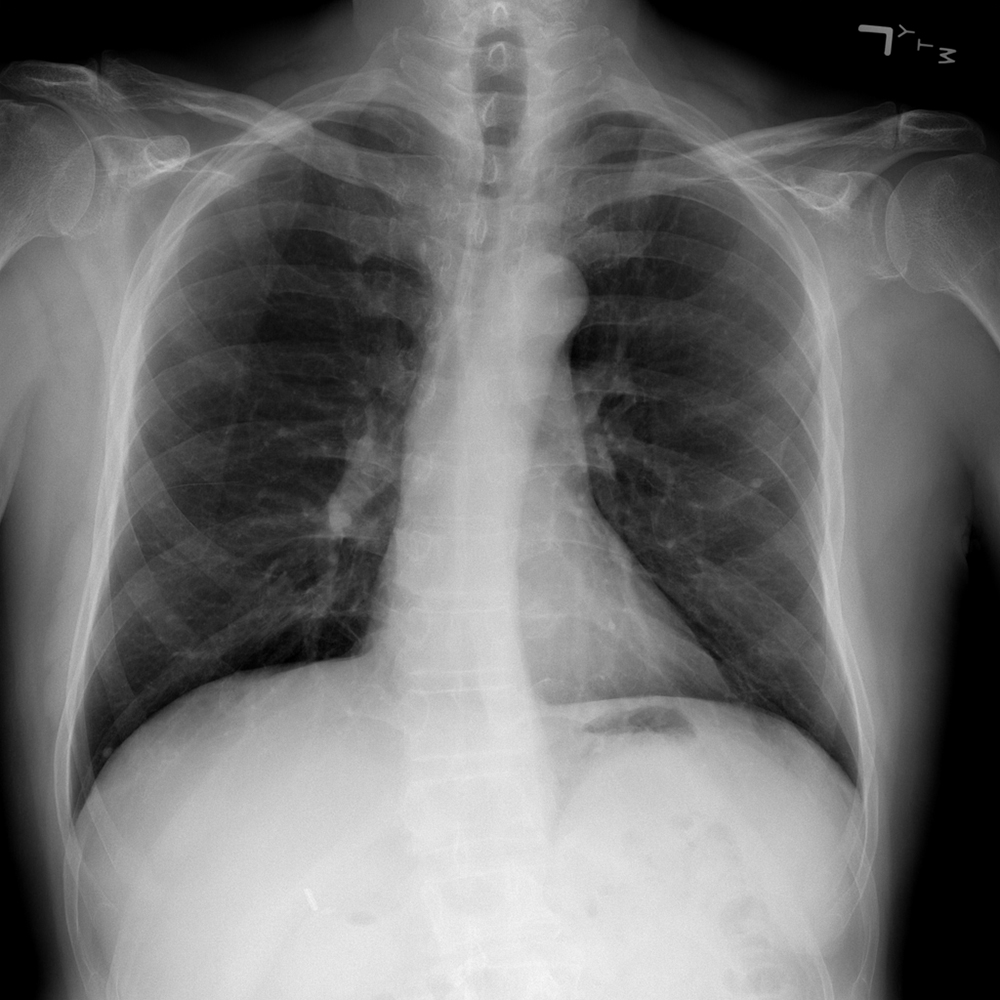

Another example, which I explored in “Our Reality” through Emma’s encounter with the police drones, is inequity across different races. Face detection and recognition systems are now widely understood to be inequitable because they have decreased accuracy with Black people compared to white people. This is incredibly inequitable and unjust. I encourage readers to learn more about the inequities with face detection and face recognition. One great place to start is the film “Coded Bias” directed and produced by Shalini Kantayya, which centers MIT Media Lab researcher Joy Buolamwini.

At one point, Emma admonishes her mother, a technologist, that she can’t solve everything with technology. How do we determine what is the best use of technology, and what is the responsibility of your colleagues who are the ones inventing it?

YK: I think that it is absolutely critical for those who are driving innovation to understand how the technology that they create sits within a broader society and interacts with people, many of whom are different from themselves. I referred earlier to this notion of a default persona, also called the “unmarked state.” Drawing from Nisi Shawl and Cynthia Ward’s book “Writing the Other,” this is more often than not someone who is white, male, heterosexual, single, young, and with no disabilities. Not only should one be thinking about how a technology would fit in the context of society, but one should also consider it in the context of the many people who do not identify with this default persona.

On top of that, when designing technologies for someone “not like me,” people need to be sure they are not invoking stereotypes or false assumptions about those who are not like themselves. There’s a book called “Design Justice” by Dr. Sasha Costanza-Chock about centering the communities for whom we are designing. As technologists, we ought to be working with those stakeholders to understand what technologies they need. And we shouldn’t presume that any specific technology is needed. It could be that a new technology is not needed.

Some aspects of Our Reality sound like fun — for example, when Emma and Liam played around with zero gravity in the science lab. If you had the opportunity and the means to use Our Reality, would you?

YK: I think it is an open research question what augmented-reality and mixed-reality technologies will be like in the next 15 years. I do think that technologies like Our Reality will exist in the not-too-distant future. But I hope that the people developing these technologies will have addressed the access questions and societal implications that I raised in the story. As written, I think I would enjoy aspects of the technology, but I would not feel comfortable using it if the equity issues surrounding Our Reality weren’t addressed.

Stepping even further back, there is a whole class of risks with mixed-reality technologies that are not deeply surfaced in this story: computer security risks. This is a topic that Franziska Roesner and I have been studying at UW for about 10 years, along with our colleagues and students. There are a lot of challenges to securing future mixed-reality platforms and applications.

So you would be one of those ideological objectors you mentioned earlier.

YK: I would, yes. And, in addition to issues of access and equity and the various security risks, I also used to be a yoga instructor. I like to see and experience the world through my real senses. I fear that mixed-reality technologies are coming. But for me, personally, I don’t want to lose the ability to experience the world for real, rather than through Goggles.

Who did you have in mind as the primary audience for “Our Reality”?

YK: I had several primary audiences, actually. In a dream world, I would love to see middle school students reading and discussing “Our Reality” in their social studies classes. I would love for the next generation to start discussing issues at the intersection of society and technology before they become technologists. If students discuss these issues in middle school, then maybe it will become second nature for them to always consider the relationship between society and technology, and how technologies can create or perpetuate injustices and inequities.

I would also love for high school and first- and second-year college students to read this story. And, of course, I would love for more senior computer scientists — advanced undergraduate students and people in industry — to read this story, too. I also hope that people read the books that I reference in the Suggested Readings section of my novella and the companion document. Those references are excellent. My novella scratches the surface of important issues, and provides a starting point for deeper considerations; the books that I reference provide much greater detail and depth.

As an educator, I wanted the story to be as accessible as possible, to the broadest audience possible. That’s why I put a free PDF of the novella on my website. I also put a PDF of the companion document on my web page. I wrote the companion document in such a way that I hope it will be useful and educational to people even if they never read the “Our Reality” novella.

What are the main lessons you hope readers will take away from “Our Reality”?

YK: I hope that readers will understand the importance of considering the relationship between society and technology. I hope that readers will understand that it is not inevitable that technologies be created. I hope that readers realize that when we do create a technology, we should do so in a responsible way that fully acknowledges and considers the full range of stakeholders and the present and future relationships between that technology and society.

Also, I tried to be somewhat overt about the flaws in the technologies featured in “Our Reality.” As I said earlier, I intentionally included those flaws for educational purposes. When one interacts with a brand-new technology in the real world, however, its flaws are often not as obvious. I would like to encourage both users and designers of technology to be critical in their consideration of new technologies, so that they can proactively spot those flaws from an equity and justice perspective.

If my story reaches people who have not been thinking about the relationship between society, racism, and technology already, I hope “Our Reality” starts them down the path of learning more. I encourage these readers to look at the “Our Reality” companion document, and explore some of the other resources that I reference. I would like to also thank these readers for caring about such an important topic.

Readers may purchase the paperback or Kindle version of “Our Reality” on Amazon.com, and access a free downloadable PDF of the novella, the companion document, and a full list of resources on the “Our Reality” webpage.